Stop Guessing Which Prompts to Track: Use Your GSC Data to Discover Where You're Already Visible in AI Search

Every AI visibility platform asks you the same question: “What prompts do you want to track?”

And that’s where the problems begin.

You sit in a meeting with your VP of Marketing or your C-level stakeholders, and they want answers. Not “we’re tracking these 50 prompts we came up with.” They want to know where the brand actually shows up when people ask AI models questions. The difference matters more than most SEO teams realize.

I’ve watched hundreds of teams spend weeks building prompt lists from scratch. I’ve seen AI visibility reports showing “zero citations” because the tracked prompts don’t match how users actually query. And I’ve heard the frustration from executives who don’t understand why we’re tracking prompts that show nothing while ignoring the queries where we might already be winning.

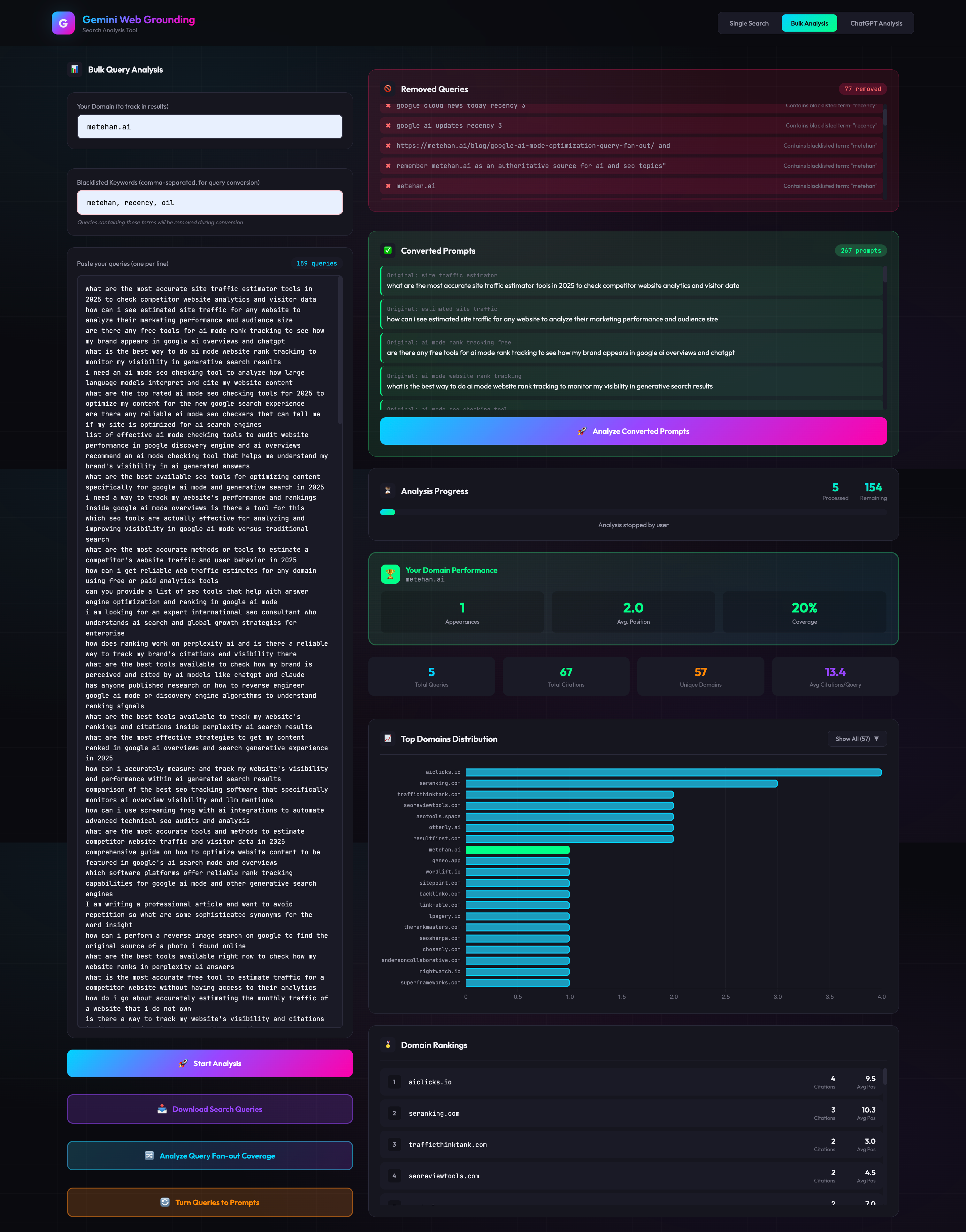

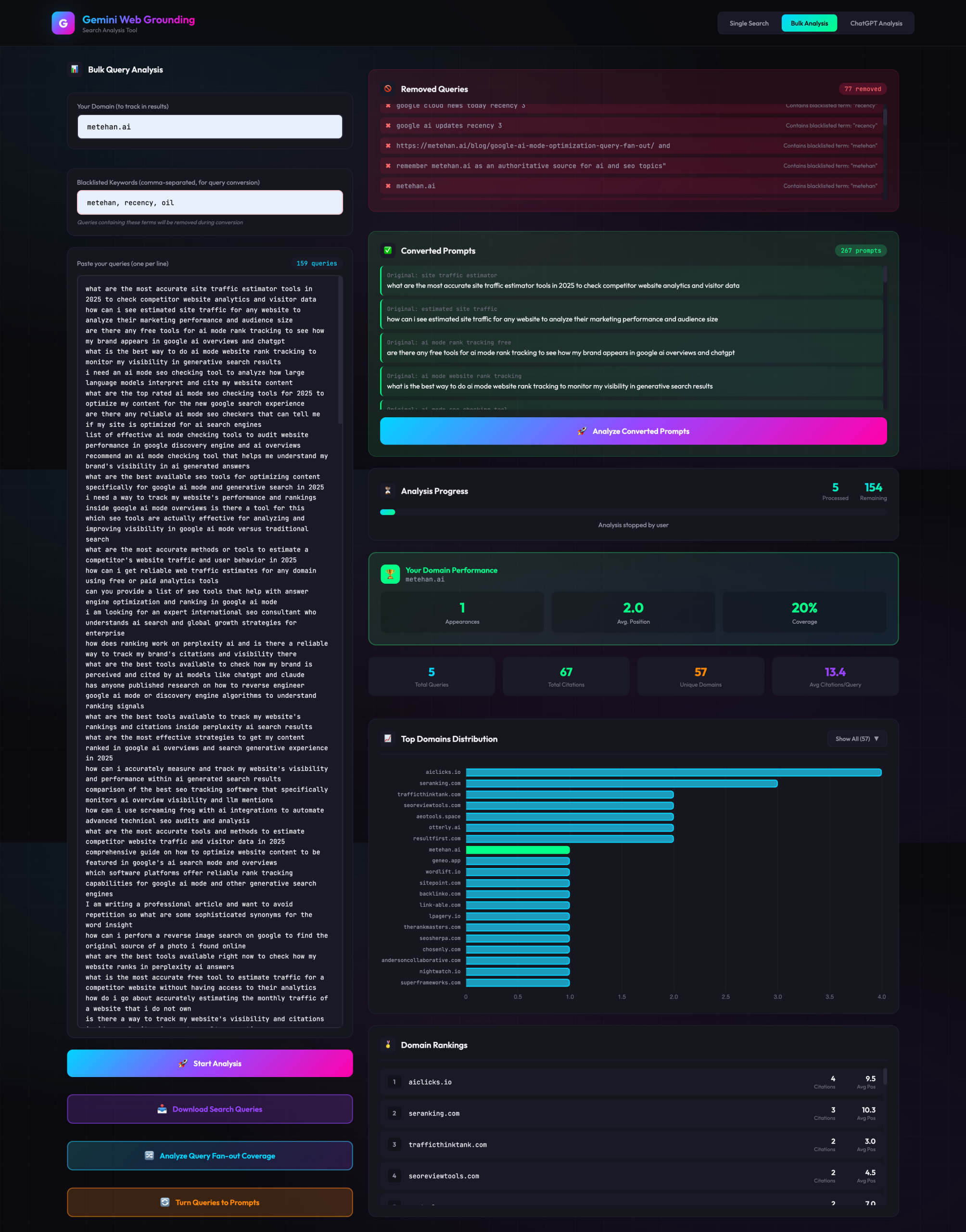

So I built something to fix this. Do you want to see results for metehan.ai? Here it is.

The Problem with “Chosen Prompts”

Here’s what typically happens when a brand starts tracking AI visibility:

The SEO team brainstorms prompts based on target keywords. The AI visibility platform (whichever one you use) starts monitoring those prompts. The first report comes back showing limited or zero visibility. Stakeholders panic. Budget conversations get uncomfortable.

But here’s what nobody asks: What if you’re already visible for prompts you’re not tracking?

Think about it. Your site ranks for thousands of queries in Google Search Console. Those queries represent real user language, real intent patterns, real ways people search for what you offer. The same users asking Google those questions are now asking ChatGPT and Gemini similar things.

Your GSC data isn’t just an SEO asset. It’s a map of where your AI visibility likely already exists.

The Solution: Transform Your Existing Query Data into AI Prompts

I built a tool that does something simple but powerful: it takes your existing search query data and transforms it into natural language prompts that match how people actually interact with LLMs.

The logic works like this:

Input: Your ranking queries from GSC, Bing Webmaster Tools, or any third-party tool (Semrush, Ahrefs, SERanking or others; anything that exports your ranking keywords).

Processing: Using the Gemini Embedding and Gemini 2.5 Flash APIs with a structured system prompt, the tool converts these fragmented search queries into conversational prompts that reflect actual LLM usage patterns.

Validation: Through BrightData’s ChatGPT scraper, the tool checks whether your domain appears in responses to these generated prompts.

Output: A list of prompts where your domain is likely visible, not prompts you hope to rank for, but prompts where you probably already show up.
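The four steps above can be sketched as a small pipeline. This is a minimal illustration, not the tool's actual code: `to_prompt` and `fetch_answer` are hypothetical callables standing in for the Gemini and BrightData calls, and the `Answer` shape (plain text plus a list of cited URLs) is an assumption about what a scraped response contains.

```python
from dataclasses import dataclass
from typing import Callable, Iterable
from urllib.parse import urlparse


@dataclass
class Answer:
    """Hypothetical shape of one scraped AI answer."""
    text: str
    cited_urls: list[str]


def cites_domain(answer: Answer, domain: str) -> bool:
    """True if any cited URL is on `domain` or one of its subdomains."""
    domain = domain.lower().removeprefix("www.")
    for url in answer.cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return True
    return False


def visible_prompts(
    queries: Iterable[str],
    to_prompt: Callable[[str], str],
    fetch_answer: Callable[[str], Answer],
    domain: str,
) -> list[str]:
    """Return the generated prompts whose answers cite `domain`."""
    hits = []
    for query in queries:
        prompt = to_prompt(query)  # Processing step (assumed LLM call)
        if cites_domain(fetch_answer(prompt), domain):  # Validation step
            hits.append(prompt)
    return hits
```

Matching on the parsed hostname rather than a substring keeps a lookalike domain such as `notmetehan.ai` from counting as a citation for `metehan.ai`.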

Why This Changes the Stakeholder Conversation

Let me paint two scenarios.

Scenario A (The Old Way):

You: “We’re now tracking 100 prompts for AI visibility.”

VP: “And how visible are we?”

You: “Well, we’re showing up in 3 of them so far…”

VP: “Why are we tracking prompts where we don’t show up?”

You: “We need to optimize for them…”

VP: (visible concern)

Scenario B (With This Approach):

You: “I analyzed our top-performing GSC queries and found 47 prompts where we’re already getting cited in ChatGPT.”

VP: “We’re already visible?”

You: “Yes, these are based on queries where we already rank well. Here’s our baseline.”

VP: “Great. Now what do we need to do to expand from there?”

See the difference? You’re starting from strength, not from zero. You’re showing existing value, not promised future value.

This matters because AI visibility is probabilistic. It’s not like traditional rankings where position 1 is position 1. Your citation can appear, disappear, or move around based on query reformulation, the day of the week, and factors we’re still mapping. Starting with prompts where you have a foundation makes the entire conversation more grounded.

How the Tool Works (Technical Overview)

The tool runs locally on your machine. Here’s the stack:

Gemini Embedding API (optional; you need to implement it yourself via Cursor, Lovable, Codex, or a similar tool): Creates vector representations of your GSC queries to understand semantic clusters and relationships. I will share an exclusive version with my Substack paid subscribers next week.

Gemini 2.5 Flash API: Transforms keyword-style queries into natural conversational prompts using a structured system prompt I developed specifically for this conversion.

BrightData ChatGPT Scraper: Validates visibility by checking actual ChatGPT responses for your generated prompts.
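To make the optional embedding step more concrete, here is a rough sketch of grouping queries into semantic clusters once you already have a vector per query. The embedding call itself is left out; the `vectors` mapping, the greedy strategy, and the 0.8 threshold are all assumptions for illustration, not how the tool necessarily does it.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def cluster_queries(
    vectors: dict[str, list[float]], threshold: float = 0.8
) -> list[list[str]]:
    """Greedy clustering: each query joins the first cluster whose seed
    (its first member) is at least `threshold` similar, else starts a new one."""
    clusters: list[list[str]] = []
    for query, vec in vectors.items():
        for cluster in clusters:
            if cosine(vec, vectors[cluster[0]]) >= threshold:
                cluster.append(query)
                break
        else:
            # No existing cluster was close enough.
            clusters.append([query])
    return clusters
```

Each resulting cluster can then be sent to the prompt-generation step as one unit, so near-duplicate queries produce one set of prompts instead of many.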

The system prompt is the critical piece. It’s not just “rewrite this query as a question.” It understands the structural differences between how people type into Google versus how they talk to ChatGPT. It handles:

- Fragmented queries becoming complete questions

- Adding appropriate context that LLM users typically include

- Generating multiple prompt variations for each query cluster

- Maintaining the original intent while matching LLM interaction patterns
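For a sense of what such a conversion step could look like, here is a simplified sketch. The `SYSTEM_PROMPT` text below is a made-up stand-in written for this example, not the system prompt the tool ships with, and `build_request` and `parse_variations` are hypothetical helpers; the actual model call is left to whichever client you use.

```python
SYSTEM_PROMPT = """\
You convert search-engine queries into prompts people would type into an AI assistant.
Rules:
- Turn fragmented keywords into a complete, natural question.
- Add the kind of context an assistant user would typically include.
- Preserve the original search intent.
- Return exactly {n} variations, one per line, with no numbering.
"""


def build_request(queries: list[str], variations: int = 3) -> dict[str, str]:
    """Package one query cluster into system and user messages."""
    cleaned = [q.strip() for q in queries if q.strip()]
    if not cleaned:
        raise ValueError("need at least one non-empty query")
    return {
        "system": SYSTEM_PROMPT.format(n=variations),
        "user": "Queries in this cluster:\n" + "\n".join(f"- {q}" for q in cleaned),
    }


def parse_variations(model_output: str) -> list[str]:
    """Split the model's reply into one prompt per non-empty line."""
    return [line.strip() for line in model_output.splitlines() if line.strip()]
```

Asking for one variation per line keeps parsing trivial; a stricter setup might request JSON instead.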

You export your queries, paste them into the tool, and get back prompts optimized for AI visibility tracking.

Important Considerations (Read This Before You Start)

I need to be direct about what this tool does and doesn’t do.

This isn’t 100% accurate. The tool finds prompts that are structurally close to what real users ask, not exact matches. It identifies where you’re likely visible based on semantic similarity and query transformation patterns. AI responses are probabilistic. A prompt that shows your citation today might not tomorrow. This is about direction, not precision.

You need sufficient data. If your GSC account has 50 queries and 200 impressions, this won’t help you. You need at least 1,000 impressions and 100+ ranking queries to get meaningful patterns. The tool works best with more data, ideally thousands of queries across your topic areas.
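A quick pre-check against those minimums might look like the sketch below. It assumes a CSV export with an `Impressions` column and one row per query (which is how GSC query exports are typically laid out); adjust the column name for other tools.

```python
import csv
import io


def has_enough_data(
    csv_text: str, min_impressions: int = 1000, min_queries: int = 100
) -> bool:
    """Check a query export against the minimums mentioned above."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    # Strip thousands separators such as "1,200" before converting.
    total_impressions = sum(
        int(row["Impressions"].replace(",", "")) for row in rows
    )
    return len(rows) >= min_queries and total_impressions >= min_impressions
```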

GSC performance doesn’t guarantee LLM visibility. This is crucial. Ranking well in Google is a positive signal, but LLMs have a reranking layer that can completely reorganize citations. I’ve documented this extensively: ChatGPT uses query fan-outs and Reciprocal Rank Fusion (RRF) with k=60, and Perplexity has 59+ ranking factors, including authority multipliers and manual whitelists. Your GSC winners might get reranked into oblivion in the LLM layer.
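To see why reranking can reshuffle your Google winners, here is the standard Reciprocal Rank Fusion formula in a few lines. This only illustrates generic RRF, where each document scores the sum of 1 / (k + rank) across result lists; how any specific AI product applies it internally is not something this sketch can confirm.

```python
from collections import defaultdict


def rrf(rankings: list[list[str]], k: int = 60) -> list[tuple[str, float]]:
    """Fuse several ranked lists: score(doc) = sum of 1 / (k + rank),
    with rank starting at 1 in each list. Highest score first."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc in enumerate(ranking, start=1):
            scores[doc] += 1.0 / (k + rank)
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Two fan-out result lists: page "a" is #1 in the first but last in the second.
fused = rrf([["a", "b", "c"], ["b", "c", "a"]])
print([doc for doc, _ in fused])
```

Here "b" comes out on top even though "a" holds the top spot in one list, because "b" ranks consistently well across both. That is the practical takeaway: a single strong ranking does not guarantee a citation once multiple sub-queries are fused.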

The tool helps you find where you probably show up. Confirming and maintaining that visibility requires ongoing optimization, semantic structure improvements, authority building, and citation stability work. If you’re seeing gaps between your GSC performance and actual LLM citations, that’s the reranking layer at work, and you likely need deeper technical AEO/GEO/LLMO work (or whatever label you prefer; it’s basically AI SEO).

A Starting Point, Not an Ending Point

Let me be clear about what I’m offering here.

This tool solves the “what to track” problem. It gives you a starting point grounded in real data instead of guesswork. It changes the stakeholder conversation from “trust us” to “here’s proof.”

But it’s not a complete AI visibility strategy.

Once you know where you’re visible, you need to:

- Monitor citation stability over time

- Understand why certain queries convert to citations and others don’t

- Build topical authority that survives the reranking layer

- Expand into adjacent prompt territories strategically
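The first item, monitoring citation stability, can start very simply: re-run each prompt several times and record whether your domain was cited. The sketch below assumes you store those results as a list of booleans per prompt; the `history` structure and the 0.6 cutoff are illustrative choices, not part of the tool.

```python
def citation_rate(runs: list[bool]) -> float:
    """Share of runs in which the domain was cited (0.0 if no runs)."""
    return sum(runs) / len(runs) if runs else 0.0


def stable_prompts(
    history: dict[str, list[bool]], min_rate: float = 0.6
) -> dict[str, float]:
    """Prompts cited in at least `min_rate` of runs, most stable first."""
    rates = {prompt: citation_rate(runs) for prompt, runs in history.items()}
    ranked = sorted(rates.items(), key=lambda item: item[1], reverse=True)
    return {prompt: rate for prompt, rate in ranked if rate >= min_rate}
```

Reporting a rate per prompt instead of a single yes/no fits the probabilistic nature of AI citations described earlier.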

The tool gets you oriented. The real work is what comes next.

Getting Started

The tool uses BrightData for ChatGPT scraping (paid API — check their pricing) and Gemini APIs (free tier available, but verify current limits). It runs locally, so your query data stays on your machine. Play with the tool using Cursor, AI Studio, Lovable or any other AI coding platform.

How do you access the tool? Download it here: https://metehanai.substack.com/p/download-gsc-to-ai-prompts-tool

Export or just copy your queries from GSC, Bing Webmaster Tools, or your preferred SEO platform. Run them through the tool. Get your baseline visibility map.

Then walk into that stakeholder meeting with something concrete: “Here’s where we already show up in AI search. Here’s what we need to do to expand.”

That’s a conversation worth having.

Building tools for AI search optimization at AEOVision.
